Seeing today’s latest round of “AI layoffs” discourse reminded me of something uncomfortable that I think a lot of people in tech already know, but are hesitant to say out loud.

These layoffs are not really about AI replacing workers directly.

But they are still because of AI.

That distinction matters.

A lot of ink has been spilled debating whether the current layoff wave is genuinely driven by AI productivity, or whether companies are simply “AI-washing” ordinary cost cutting. You can find essays arguing both sides. One side claims AI is fundamentally transforming software development and knowledge work.

The other asks: if AI is truly delivering 5x productivity gains, why do products look mostly the same? Why are revenues not exploding upward? Why do organizations still move slowly?

Both sides are partially right.

Because the real story is not that AI suddenly replaced 30% of employees overnight. It clearly has not. Most companies are nowhere close to operating autonomously through AI agents. Most workflows are still deeply human, deeply organizational, and deeply messy.

But something equally important did happen. AI changed the economics and speed of execution faster than organizations could adapt to it.

And that mismatch is now destabilizing companies from the inside.

The easiest way to understand this is through a boring but useful framework every management consultant eventually rediscovers and puts into a PowerPoint slide:

Input. Output. Outcome.

Code is input. Features are output. Revenue, retention, usage, and customer satisfaction are outcomes.

For years, engineering organizations operated under a very important constraint: writing software was expensive and slow.

The CEO had 150 ideas. Product wanted to test all of them. Engineering said: “We only have bandwidth for one.”

That constraint was frustrating, but healthy. Because it forced prioritization. It forced argument. It forced teams to kill bad ideas before they consumed too many resources.

Then generative AI arrived.

Now suddenly:

- MVPs appear in days.

- Internal tools get built overnight.

- Pull requests explode.

- Individual engineers generate dramatically more code.

- Teams can prototype five directions simultaneously instead of debating one.

At first this feels magical. And in many ways, it is. But then something strange happens. The volume of code increases dramatically. The business outcomes often do not.

And this is where both AI evangelists and AI skeptics start talking past each other.

The evangelists point at the explosion in output. They are not hallucinating that. It is real. Anyone working inside a modern tech company can see it. AI usage is everywhere now. Even conservative companies that try to restrict AI adoption still have employees quietly using ChatGPT, Gemini, Claude, Cursor, Copilot, or some internal equivalent.

Meanwhile, forward-leaning companies are consuming AI tokens at astonishing rates. Entire engineering teams now operate with AI copilots open all day. Code generation volume has exploded. Internal tooling velocity has exploded. Experiments that once took weeks now take hours.

The skeptics then ask the obvious question:

“If all this productivity is real, where are the corresponding outcomes?”

- Why are the products not radically better?

- Why are revenues not scaling proportionally?

- Why do users barely notice the difference?

That is the correct question.

Because AI dramatically accelerated inputs without automatically improving judgment.

And once that happened, organizations discovered something uncomfortable:

Coding was never the only bottleneck. In many companies, it was not even the primary bottleneck anymore. Alignment was. Decision-making was. Prioritization was. Organizational coherence was. The ability to distinguish two good ideas from eight bad ones was.

For years, engineering scarcity hid these problems.

When code was expensive, organizations were forced to compress decision-making. They had to debate priorities carefully because implementation carried real cost. Bad ideas died early because nobody wanted to waste six months building them.

Now implementation is cheap.

So the filtering mechanisms weaken.

Instead of debating whether something should exist, teams often just build it because they can.

And this creates a second-order organizational problem that I think many executives are only now beginning to realize.

AI does not just accelerate execution.

It accelerates divergence.

Two teams receive loosely aligned objectives and independently build different solutions overnight based on different assumptions. Product alignment that once happened before implementation now happens after implementation — when conflicting prototypes already exist.

Except nobody really wants to slow down and align properly anymore.

Because once people get used to infinite AI-assisted execution capacity, every disagreement feels solvable through “just building another version.”

So instead of reducing organizational chaos, AI often amplifies it.

Everyone becomes locally productive while the organization becomes globally incoherent.

This is the part most discussions about AI productivity completely miss.

The bottleneck shifted.

We thought software engineering was the limiting factor.

Turns out organizational coordination was.

And when the original bottleneck disappears overnight, all the hidden inefficiencies downstream become painfully visible.

You suddenly notice:

- overlapping roadmaps,

- duplicate tooling,

- stakeholder conflicts,

- management layers,

- endless meetings,

- redundant approvals,

- teams blocking teams,

- political coordination disguised as process.

The faster coding becomes, the more expensive organizational friction becomes.

That is one side of the story.

The other side is simpler.

AI is expensive.

Not philosophically expensive. Literally expensive.

If your engineers are consuming tens of thousands of dollars per year in AI tokens, inference, compute, and tooling costs, that spend has to come from somewhere.

And right now, most companies are not seeing proportional revenue expansion from that spend.

That matters.

Because businesses ultimately run on unit economics, not technological excitement.

If your input costs rise 30%, but your outcomes barely move, something must rebalance.

Companies can tolerate this temporarily while chasing strategic advantage. But eventually the finance organization starts asking obvious questions:

- Why is infrastructure spend exploding?

- Why is AI tooling spend exploding?

- Why are engineering costs rising?

- Why are we generating dramatically more output without equivalent business results?

And once those questions start getting asked seriously, layoffs become mathematically predictable.

Not because AI replaced the employee one-for-one. But because AI changed the company’s cost structure before it changed the company’s outcomes.
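The arithmetic behind that claim can be sketched in a few lines of Python. This is a deliberately crude model, not a description of how any real company budgets: it assumes revenue stays flat, that the only lever is headcount, and that every figure (team size, cost per engineer, AI spend per engineer) is hypothetical.

```python
def roles_to_cut(engineers: int, cost_per_engineer: int, ai_spend_per_engineer: int) -> int:
    """Roles that must go for total engineering cost to return to its pre-AI level.

    All inputs are hypothetical; revenue is assumed flat, so cost is the only lever.
    """
    baseline_budget = engineers * cost_per_engineer
    # Each remaining engineer now costs their original cost plus AI tooling.
    loaded_cost = cost_per_engineer + ai_spend_per_engineer
    # Largest headcount whose loaded cost still fits inside the old budget.
    affordable = baseline_budget // loaded_cost
    return engineers - affordable


# Illustrative numbers only: 100 engineers at $200k each,
# with AI spend adding 30% ($60k) per engineer.
print(roles_to_cut(100, 200_000, 60_000))  # 24
```

Under these assumed numbers, a 30% rise in per-engineer cost with flat outcomes means roughly a quarter of the team no longer fits inside the old budget. The sketch ignores severance, productivity changes, and any revenue the AI spend might eventually produce.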

This is why calling these layoffs “AI-washing” is incomplete. Yes, many companies already had structural issues:

- overhiring,

- bloated middle management,

- slowing growth,

- declining margins,

- weak product differentiation,

- post-ZIRP expansion hangovers.

All true.

But AI still matters profoundly here because it changed executive expectations around what level of organizational efficiency should now be possible.

And there is another uncomfortable truth underneath all of this. Large organizations contain slack by design.

That redundancy is not accidental. It creates resilience. It allows institutional continuity. It allows people to leave, switch teams, take parental leave, or disappear without the company collapsing instantly.

But that same redundancy also means many large companies can remove 10–20% of staff and continue functioning in the short term with surprisingly little disruption.

Sometimes they even move faster temporarily.

Fewer stakeholders. Fewer alignment loops. Fewer competing priorities. Fewer organizational veto points.

Again, this does not mean layoffs are universally good or strategically wise in the long term. Many companies will absolutely cut too deep and damage themselves. Some are already doing so.

But from the perspective of executives staring at exploding AI budgets and organizational coordination problems, the logic behind these layoffs is not irrational.

They are trying to rebalance the organization around a new execution reality. Whether they actually know how to operate effectively in that new reality is a separate question.

And that, ultimately, is the real story.

The biggest misconception in the current AI debate is the assumption that AI productivity automatically translates into business productivity.

It does not. AI massively increases the capacity to generate inputs. But companies still need systems that convert inputs into outcomes.

They still need judgment. They still need prioritization. They still need coherent product strategy. They still need organizational alignment. They still need actual understanding of customer problems.

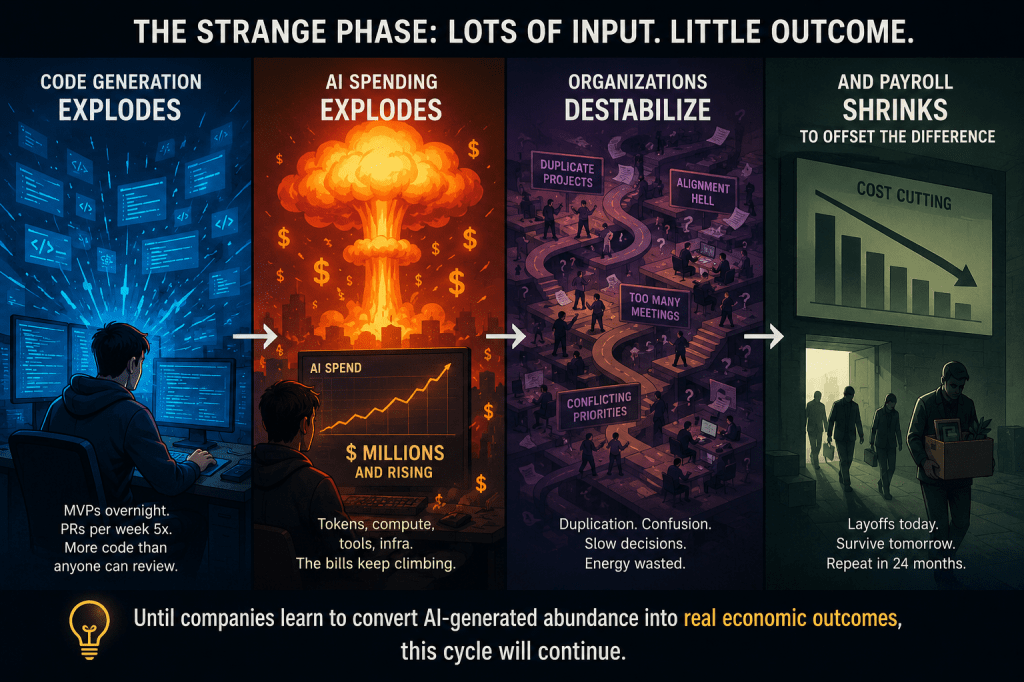

And until companies learn how to translate AI-generated abundance into real economic outcomes, we are going to continue seeing this strange phase where:

- code generation explodes,

- AI spending explodes,

- organizations destabilize,

- and payroll shrinks to offset the difference.

These layoffs are not happening because AI has fully replaced humans.

They are happening because AI changed the economics, expectations, and operating tempo of companies faster than companies learned how to adapt.

AI accelerated execution. Human organizations did not accelerate coordination at the same speed. And that gap is where the layoffs are coming from.
