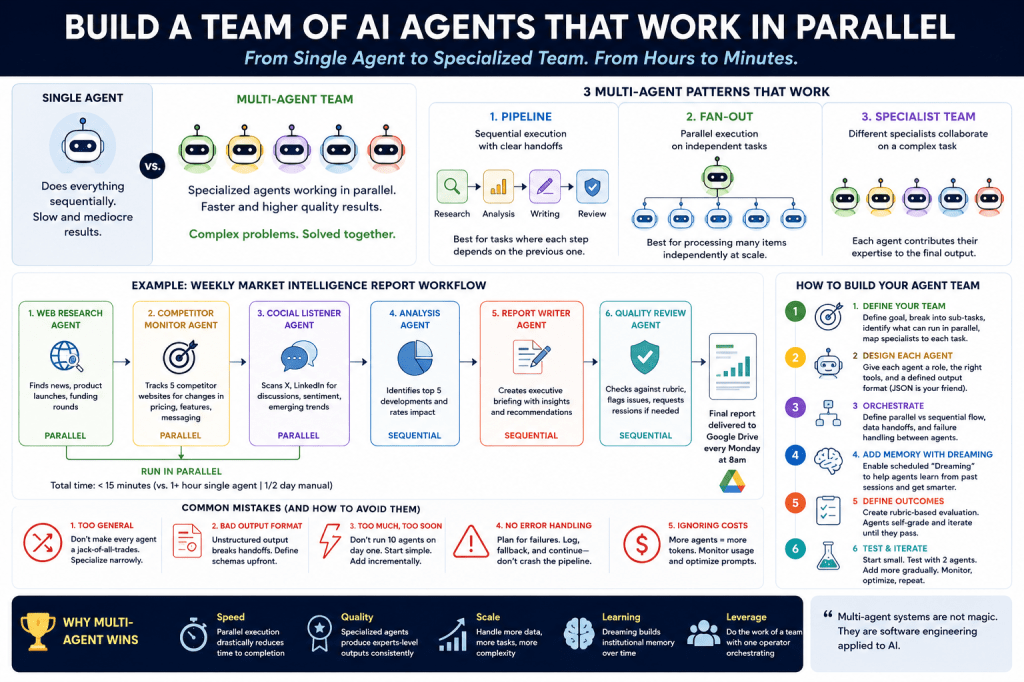

A single AI agent is useful. A team of AI agents working together is a completely different category of software.

Most people still think about AI agents the wrong way. They imagine a single super-agent that can research, write, code, analyze, review, and execute tasks end-to-end. That model works for demos. It breaks under real production complexity.

What is emerging instead is something much closer to organizational design.

One agent researches. Another analyzes. Another validates. Another writes. Another reviews. Another orchestrates the entire workflow.

This is the architecture Anthropic pushed further on May 6th, 2026, when they announced multi-agent orchestration for Claude Managed Agents at their Code with Claude event. Developers can now coordinate up to 20 specialized agents running in parallel on a single task.

That detail matters: parallel. Not sequentially waiting on each other. Simultaneously executing specialized work streams.

This is not experimental anymore. Companies like Netflix, Shopify, and Harvey are already deploying multi-agent systems in production. Netflix uses coordinated agents to analyze massive build logs simultaneously. Harvey uses them across legal research, drafting, and compliance workflows. Shopify has publicly discussed aggressive internal targets for autonomous coding.

The important thing is not the tooling announcement itself. The important thing is the architectural shift underneath it.

The future of AI systems is not one bigger model doing everything. The future is coordinated systems of specialized agents working together.

The easiest way to understand this is to stop thinking about prompts and start thinking about teams.

A single agent behaves like a single employee. Even if the employee is exceptional, they can only execute one cognitive thread at a time. Research first. Analysis second. Writing third. Review fourth.

A multi-agent system behaves like an actual organization. Specialized workers execute simultaneously within defined scopes and communicate through structured interfaces.

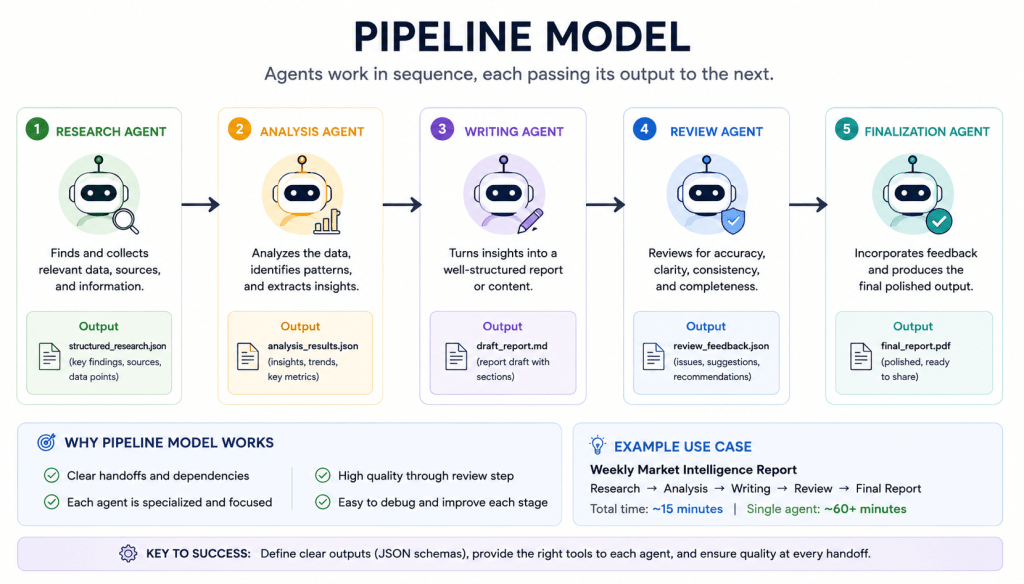

The first practical implementation pattern is the pipeline model.

In a pipeline, agents execute sequentially with tightly scoped responsibilities. A research agent gathers source material. An analysis agent identifies patterns and extracts insights. A writing agent turns structured findings into readable output. A review agent checks for quality, consistency, hallucinations, and missing information.

This works extremely well for predictable workflows where downstream steps depend on upstream outputs. Most content operations, compliance reviews, report generation systems, and internal research pipelines fit this model.
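A minimal sketch of the pipeline pattern in Python. The four stage functions are hypothetical stubs standing in for model calls, and the shared `state` dictionary is an assumed handoff format rather than anything a particular framework prescribes.

```python
from typing import Callable

# A stage takes the accumulated state and returns an extended copy of it.
Stage = Callable[[dict], dict]


def research(state: dict) -> dict:
    # Stub: a real research agent would gather source material here.
    return {**state, "sources": [f"note about {state['topic']}"]}


def analyze(state: dict) -> dict:
    # Stub: turn raw sources into "insights" (here, just upper-cased notes).
    return {**state, "insights": [s.upper() for s in state["sources"]]}


def write(state: dict) -> dict:
    # Stub: turn structured findings into readable output.
    return {**state, "draft": "; ".join(state["insights"])}


def review(state: dict) -> dict:
    # Stub: a real reviewer would check quality and missing information.
    return {**state, "approved": bool(state["draft"])}


def run_pipeline(stages: list[Stage], state: dict) -> dict:
    # Each stage only starts once the previous one has finished.
    for stage in stages:
        state = stage(state)
    return state


result = run_pipeline([research, analyze, write, review], {"topic": "pricing"})
```

Because every stage reads only what earlier stages wrote, the order of the list is the whole orchestration logic for this pattern.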

The second pattern is fan-out orchestration. This is where multi-agent systems become dramatically more powerful than single-agent systems.

A commander agent decomposes a large task into independent subtasks and distributes them to worker agents running in parallel. One agent analyzes Document A. Another analyzes Document B. Another processes customer sentiment. Another reviews pricing changes. Another scans recent industry news.

All of them run simultaneously.

The commander then synthesizes the outputs into a unified result.

This is the architecture that starts compressing hours of work into minutes. More importantly, it scales cognitive throughput horizontally rather than vertically. Instead of asking one overloaded agent to reason through everything, you distribute reasoning across specialized workers.
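The fan-out flow above can be sketched with Python's `asyncio`. The `worker` and `commander` functions are hypothetical, the sleep stands in for model latency, and `MAX_PARALLEL` simply reuses the figure of 20 quoted earlier as an assumed concurrency cap.

```python
import asyncio

MAX_PARALLEL = 20  # assumed cap, borrowed from the figure quoted earlier


async def worker(name: str, payload: str, limit: asyncio.Semaphore) -> dict:
    # The semaphore keeps the number of in-flight workers under the cap.
    async with limit:
        await asyncio.sleep(0.01)  # stands in for a model call
        return {"worker": name, "summary": f"{name} processed {len(payload)} chars"}


async def commander(subtasks: dict[str, str]) -> dict:
    limit = asyncio.Semaphore(MAX_PARALLEL)
    # Fan out: start every independent subtask at once and wait for all of them.
    results = await asyncio.gather(
        *(worker(name, payload, limit) for name, payload in subtasks.items())
    )
    # Fan in: merge the worker outputs into one unified result.
    return {
        "sections": {r["worker"]: r["summary"] for r in results},
        "count": len(results),
    }


briefing = asyncio.run(
    commander({"doc_a": "alpha", "doc_b": "beta beta", "sentiment": "ok"})
)
```

Total wall-clock time is roughly that of the slowest worker rather than the sum of all of them, which is where the compression from hours to minutes comes from.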

The third pattern is the specialist team model. This is the most organizationally interesting structure because it mirrors how high-functioning companies already operate.

Imagine a product launch workflow.

One agent analyzes market positioning and competitors. Another evaluates technical feasibility. Another models pricing scenarios and unit economics. Another writes launch copy and landing pages. Another performs consistency and quality review.

Each agent stays inside its expertise boundary.

That boundary discipline is critical.

Most failed agent systems fail because every agent is too general. People accidentally recreate a single-agent architecture distributed across multiple poorly defined agents. The result is redundancy, confusion, token waste, and degraded outputs.

Specialization is the entire point.
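One way to make boundary discipline checkable is to declare each agent's scope as data. This is a sketch only: the `Specialist` type, the scope labels, and the `route` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Specialist:
    name: str
    scope: frozenset[str]  # the kinds of task this agent is allowed to own


TEAM = [
    Specialist("market", frozenset({"positioning", "competitors"})),
    Specialist("feasibility", frozenset({"architecture", "dependencies"})),
    Specialist("pricing", frozenset({"pricing", "unit_economics"})),
]


def find_overlaps(team: list[Specialist]) -> list[tuple[str, str, list[str]]]:
    # Overlapping scopes are the redundancy the text warns about.
    return [
        (a.name, b.name, sorted(a.scope & b.scope))
        for a, b in combinations(team, 2)
        if a.scope & b.scope
    ]


def route(task_kind: str) -> Specialist:
    # Exactly one owner per task kind; anything else is a design error.
    owners = [s for s in TEAM if task_kind in s.scope]
    if len(owners) != 1:
        raise ValueError(f"{task_kind!r} has {len(owners)} owners, expected 1")
    return owners[0]
```

Running `find_overlaps(TEAM)` before deployment surfaces agents that are too general, and `route` refuses work that no specialist owns instead of handing it to whoever is closest.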

If you want to build a real multi-agent system, the first step is not writing prompts. It is workflow decomposition.

Define the overall business objective first.

For example:

“Generate a weekly competitive intelligence briefing for our executive team.”

Then break the work into distinct cognitive functions: competitor monitoring, pricing analysis, social listening, market research, synthesis, writing, and quality review.

Then determine which tasks can run independently and which require upstream dependencies.

This matters because orchestration logic becomes the core infrastructure layer.
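As a sketch of that decomposition step, the functions from the briefing example can be written as a dependency graph and grouped into waves with the standard-library `graphlib` module. The specific dependencies below are an assumption made for illustration; a real workflow may wire them differently.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it can start.
DEPENDS_ON = {
    "competitor_monitoring": set(),
    "pricing_analysis": set(),
    "social_listening": set(),
    "market_research": set(),
    "synthesis": {
        "competitor_monitoring",
        "pricing_analysis",
        "social_listening",
        "market_research",
    },
    "writing": {"synthesis"},
    "quality_review": {"writing"},
}


def execution_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    # Tasks in the same wave have no unmet dependencies and can run in parallel.
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = execution_waves(DEPENDS_ON)
```

Here the four gathering tasks land in the first wave and can fan out, while synthesis, writing, and review form a short pipeline behind them.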

Once the workflow is decomposed, each agent needs three things: a narrowly defined role, access to the correct tools, and a structured output format.
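Those three requirements can be captured in a small specification object. The sketch below is illustrative: `AgentSpec`, the tool name `fetch_price_page`, and the prompt wording are all assumptions, not part of any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    role: str                       # the narrowly defined role
    tools: tuple[str, ...]          # the only tools this agent may call
    output_fields: tuple[str, ...]  # keys the agent must return

    def system_prompt(self) -> str:
        # Render the spec into instructions for the underlying model.
        return (
            f"You are the {self.role}. "
            f"You may only use these tools: {', '.join(self.tools)}. "
            "Reply with a JSON object containing exactly these keys: "
            f"{', '.join(self.output_fields)}."
        )

    def allows(self, tool: str) -> bool:
        # Checked by the orchestrator before it executes any tool call.
        return tool in self.tools


pricing_agent = AgentSpec(
    role="pricing analyst",
    tools=("fetch_price_page",),  # hypothetical tool name
    output_fields=("competitor", "old_price", "new_price"),
)
```

Keeping the spec as data means the same object can drive the prompt, the permission check, and the validation of what comes back.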

The output format is the part most builders underestimate.

Agents communicate through interfaces exactly like software systems do. If one agent outputs vague prose while another expects structured JSON, the handoff breaks immediately.
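A minimal sketch of guarding a handoff, using only the standard library. The receiving side parses the upstream agent's output against an explicit contract with required fields, an accepted range, a fallback value, and a defined error. The `Finding` shape and its field names are hypothetical.

```python
import json
from dataclasses import dataclass

DEFAULT_CONFIDENCE = 0.5  # fallback value when the producer omits the field


class ContractError(ValueError):
    """Raised when an agent's output violates the agreed schema."""


@dataclass(frozen=True)
class Finding:
    competitor: str
    claim: str
    confidence: float = DEFAULT_CONFIDENCE


def parse_finding(raw: str) -> Finding:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ContractError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ContractError("expected a JSON object")
    # Required fields must be present and non-empty strings.
    for field in ("competitor", "claim"):
        value = data.get(field)
        if not isinstance(value, str) or not value.strip():
            raise ContractError(f"missing or empty required field: {field}")
    # Optional field with a fallback and an accepted range.
    confidence = data.get("confidence", DEFAULT_CONFIDENCE)
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ContractError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ContractError("confidence must be between 0 and 1")
    return Finding(data["competitor"], data["claim"], float(confidence))
```

For example, `parse_finding('{"competitor": "Acme", "claim": "cut prices"}')` fills in the fallback confidence, while a reply made of vague prose raises `ContractError` at the boundary instead of corrupting a downstream agent.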

The highest leverage decision in multi-agent design is standardizing data contracts between agents. Define explicit schemas: required fields, acceptable formats, fallback values, error handling behavior. Treat agent communication like API design, not conversation.

Then comes orchestration.

Some agents run in parallel. Some run sequentially. Some aggregate outputs. Some validate outputs. Some retry failures. What matters is deterministic coordination.
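Validation and retry, two of the coordination behaviors listed above, can be sketched as a small wrapper. The names `run_with_retry` and `flaky_step` are hypothetical, and the bounded attempt count is one assumed way of keeping coordination deterministic.

```python
from typing import Callable, Optional


def run_with_retry(
    step: Callable[[int], str],
    validate: Callable[[str], bool],
    max_attempts: int = 3,
) -> str:
    # Run a step, validate its output, and retry a fixed number of times.
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            output = step(attempt)
        except Exception as exc:  # the step itself failed
            last_error = exc
            continue
        if validate(output):
            return output
        last_error = ValueError(f"attempt {attempt}: output failed validation")
    raise RuntimeError(f"step failed after {max_attempts} attempts") from last_error


def flaky_step(attempt: int) -> str:
    # Stub agent: returns an empty answer first, a usable one on the retry.
    return "" if attempt < 2 else "validated output"


output = run_with_retry(flaky_step, validate=lambda text: bool(text.strip()))
```

The orchestrator, not the agent, decides when an output is acceptable and when to give up, so the same inputs always produce the same control flow.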

The orchestration layer is becoming more important than the model itself. This is where the market is heading: not toward “best model wins,” but toward “best operational AI system wins.”

That includes memory systems, evaluation frameworks, workflow coordination, permissioning, tool integration, auditability, and institutional learning.

One of the most important recent developments here is persistent agent memory.

Anthropic’s “Dreaming” feature is effectively organizational memory for AI systems. Agents review historical sessions, extract patterns, identify mistakes, and curate memory stores between runs. That sounds abstract until you realize what it means operationally.

Your agent team accumulates institutional knowledge over time. It learns what good outputs look like. It remembers prior failures. It refines internal heuristics. It adapts to organizational preferences. This is much closer to onboarding and organizational learning than prompting.
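To make the idea concrete, here is a toy sketch of curating memory between runs. It is not Anthropic's implementation or API; the session format, `curate_lessons`, and `with_memory` are hypothetical, and the rule of keeping only lessons that recur across sessions is an assumed heuristic.

```python
import json
from collections import Counter


def curate_lessons(sessions: list[dict], min_sessions: int = 2) -> list[str]:
    # Keep only lessons that show up in at least `min_sessions` sessions.
    counts = Counter(
        lesson for session in sessions for lesson in set(session.get("lessons", []))
    )
    return sorted(lesson for lesson, n in counts.items() if n >= min_sessions)


def with_memory(task: str, lessons: list[str]) -> str:
    # Prepend curated lessons so the next run starts from prior experience.
    if not lessons:
        return task
    notes = "\n".join(f"- {lesson}" for lesson in lessons)
    return f"Lessons from earlier runs:\n{notes}\n\nTask: {task}"


sessions = [
    {"lessons": ["cite sources", "keep summary short"]},
    {"lessons": ["cite sources", "use metric units"]},
    {"lessons": ["keep summary short", "cite sources"]},
]

# The JSON string is what would be persisted between runs.
memory_blob = json.dumps(curate_lessons(sessions))
prompt = with_memory("Draft the weekly briefing", json.loads(memory_blob))
```

One-off observations such as "use metric units" are dropped, while patterns that repeat become standing guidance for the next run.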

The companies that understand this early will build enormous advantages because most competitors are still focused on prompt engineering while the frontier is shifting toward system design.

The other major shift is evaluation infrastructure. High-performing multi-agent systems do not rely on blind trust. They define explicit rubrics for success. A report must contain data from all five competitors. An analysis must contain at least three non-obvious insights. A summary must remain under a defined token limit. A coding agent must pass unit tests before deployment.

This creates closed-loop quality systems instead of one-shot generation.
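A closed loop of this kind can be sketched as a rubric of named checks plus a regenerate-until-pass driver. The competitor names, the word count used as a crude stand-in for a token limit, and the `stub_generate` function are all placeholders for illustration.

```python
from typing import Callable

COMPETITORS = ["A", "B", "C", "D", "E"]  # placeholder names
MAX_SUMMARY_WORDS = 50  # word count as a rough proxy for a token limit

RUBRIC: dict[str, Callable[[dict], bool]] = {
    "covers_all_competitors": lambda r: set(COMPETITORS) <= set(r["competitors_covered"]),
    "has_three_insights": lambda r: len(r["insights"]) >= 3,
    "summary_within_limit": lambda r: len(r["summary"].split()) <= MAX_SUMMARY_WORDS,
}


def failed_checks(report: dict) -> list[str]:
    return [name for name, check in RUBRIC.items() if not check(report)]


def closed_loop(generate: Callable[[list[str]], dict], max_rounds: int = 3) -> dict:
    # Feed the names of failed checks back into the generator until all pass.
    feedback: list[str] = []
    for _ in range(max_rounds):
        report = generate(feedback)
        feedback = failed_checks(report)
        if not feedback:
            return report
    raise RuntimeError(f"rubric still failing after {max_rounds} rounds: {feedback}")


def stub_generate(feedback: list[str]) -> dict:
    # Stub agent: misses one competitor until told about it.
    report = {
        "competitors_covered": COMPETITORS[:4],
        "insights": ["a", "b", "c"],
        "summary": "short summary",
    }
    if "covers_all_competitors" in feedback:
        report["competitors_covered"] = list(COMPETITORS)
    return report


final = closed_loop(stub_generate)
```

The first draft fails one check, the failure is passed back as feedback, and the second draft passes, which is the difference between a closed loop and one-shot generation.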

The future AI stack increasingly looks like this: models at the bottom, orchestration above them, memory above orchestration, evaluation above memory, and organizational workflows wrapped around the entire system.

This is why multi-agent systems matter so much. They are not merely faster chatbots. They are the first real operational layer for AI-native organizations. And the implications are enormous.

A six-person research workflow becomes one operator coordinating specialized agents. A consulting deliverable that took two days becomes a 20-minute orchestrated pipeline. An agency becomes less about labor accumulation and more about workflow architecture.

The people who learn these patterns now are not just learning “AI tools.” They are learning how the next generation of software organizations will operate. That is the real shift underway.
