Most people still use AI like it is a chatbot.

That framing is already obsolete. AI is not becoming a feature. It is becoming a complete industrial stack, with its own infrastructure layers, operating systems, orchestration frameworks, application ecosystems, and economic gravity.

What cloud computing did to enterprise infrastructure over the last twenty years, AI is about to do to every layer of software, decision-making, and digital labor. The mistake many investors, founders, and operators make is viewing AI through only one layer of the stack.

Some focus entirely on models. Some focus entirely on applications. Some obsess over GPUs. Others think agents alone are the future.

In reality, AI is becoming a vertically integrated system where every layer compounds the value of the layer above it. That is what makes this moment structurally important.

The modern AI economy is not one market. It is an interconnected stack.

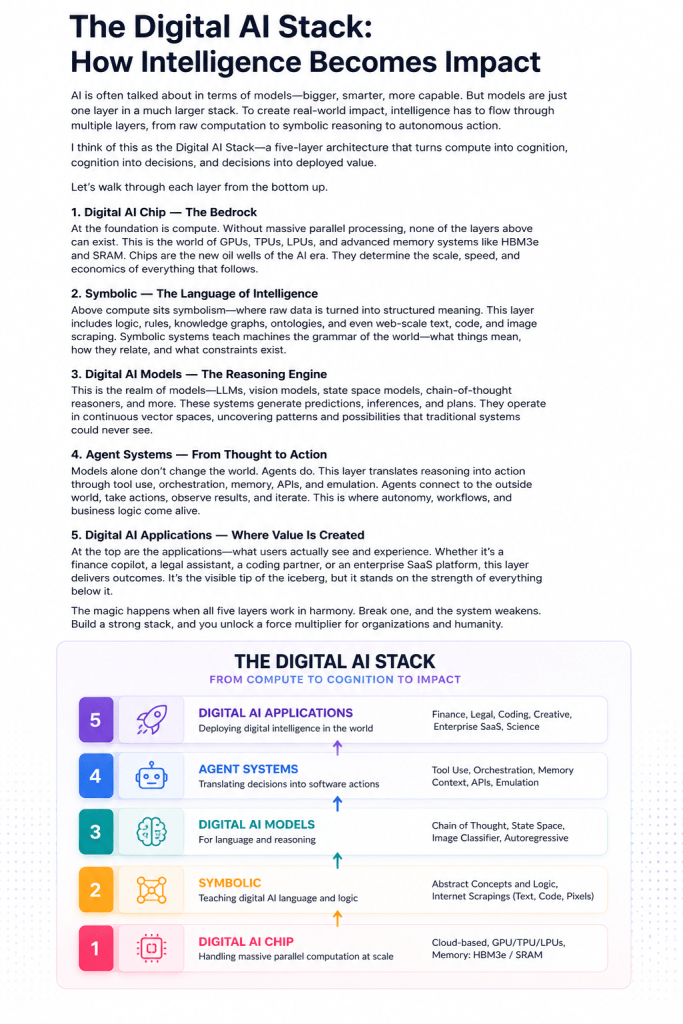

Layer 1: The Digital AI Chip

At the bottom of the stack sits compute.

This is the industrial foundation powering everything above it. Without massive parallel computation, none of the modern AI ecosystem exists.

For years, most people thought CPUs were the center of computing. AI changed that assumption completely. Training and inference workloads massively reward parallelism, memory bandwidth, and specialized tensor operations. That shifted power toward GPUs, TPUs, LPUs, and custom accelerators such as those built by Cerebras.

This is why companies like NVIDIA became some of the most strategically important companies in the world. The market is not simply paying for chips. It is paying for the ability to manufacture intelligence at scale. And increasingly, memory architecture matters just as much as raw compute.

HBM3e, SRAM optimization, interconnect bandwidth, power efficiency, and distributed cluster orchestration are becoming critical competitive layers because modern AI systems are fundamentally constrained by data movement. This is the territory of memory makers such as Micron, SK Hynix, and, to a lesser extent, Sandisk.
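The data-movement constraint can be made concrete with a rough, roofline-style estimate. The sketch below compares the time to read a dense model's weights from memory with the time to do the arithmetic for one decoding step. The `Accelerator` class, the hardware figures, and the 70-billion-parameter model are illustrative assumptions, not vendor specifications, and the estimate ignores KV-cache traffic and interconnect costs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    """Hypothetical accelerator; the numbers used below are illustrative only."""
    peak_flops: float     # floating-point operations per second
    mem_bandwidth: float  # bytes per second


def decode_step_time(params: float, bytes_per_param: float,
                     batch: int, hw: Accelerator) -> dict:
    """Estimate one decoding step for a dense model.

    Assumes every weight is read once per step and that each parameter
    costs about 2 FLOPs (multiply and add) per sequence in the batch.
    """
    compute_s = 2.0 * params * batch / hw.peak_flops
    memory_s = params * bytes_per_param / hw.mem_bandwidth
    return {
        "compute_s": compute_s,
        "memory_s": memory_s,
        "bound": "memory" if memory_s > compute_s else "compute",
    }


# Assumed hardware: 1e15 FLOP/s peak, 3e12 bytes/s memory bandwidth.
hw = Accelerator(peak_flops=1e15, mem_bandwidth=3e12)

# Assumed model: 70e9 parameters stored in 2 bytes each.
single = decode_step_time(params=70e9, bytes_per_param=2, batch=1, hw=hw)
batched = decode_step_time(params=70e9, bytes_per_param=2, batch=512, hw=hw)

print(single["bound"], batched["bound"])
```

Under these assumptions a single-sequence step is limited by memory traffic by a wide margin, and only large batches shift the limit to arithmetic. That is the sense in which memory architecture competes with raw compute.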

The dirty secret of AI infrastructure is that intelligence is often bottlenecked not by reasoning capability, but by how fast systems can move memory through the stack. This is also why the AI race is becoming geopolitical. Countries are realizing that compute capacity is not merely technology infrastructure. It is economic leverage.

The cloud providers understood this early.

Amazon Web Services, Microsoft Azure, and Google Cloud are intelligence utilities.

Layer 2: Symbolic Systems

Above the hardware sits symbolic representation. AI models are not magical. They are prediction systems trained on symbolic abstractions of reality.

Text. Code. Images. Audio. Relationships. Logic.

The internet became the largest symbolic dataset ever created by humanity.

Every GitHub repository, Wikipedia article, Stack Overflow answer, scientific paper, Reddit discussion, legal contract, customer support transcript, and YouTube subtitle became training material for machine intelligence. This layer matters because intelligence requires abstraction.

A model cannot reason about concepts unless reality has first been translated into symbolic structures.

This is why data quality matters so much.

Garbage symbolic systems create garbage reasoning systems.

It also explains why enterprises are suddenly obsessed with knowledge graphs, embeddings, retrieval systems, vector databases, and internal context pipelines. The next wave of enterprise AI will not merely be powered by public internet knowledge. It will be powered by proprietary symbolic context unique to each organization. The institutional memory of a company becomes machine-readable. That changes the economics of expertise.
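As a rough illustration of how a retrieval system makes institutional memory machine-readable, the sketch below ranks internal documents against a query by cosine similarity. The bag-of-words `embed` function is a toy stand-in for a learned embedding model, and the documents and function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a word-count vector (stand-in for a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


# Hypothetical internal policy snippets.
docs = [
    "Refund requests over 500 dollars need approval from the finance lead.",
    "Production deploys are frozen during the last week of each quarter.",
    "New vendors must complete a security review before onboarding.",
]

top = retrieve("who approves refund requests", docs)
print(top[0])
```

A production pipeline would replace `embed` with a trained embedding model and the sorted list with a vector index, but the shape is the same: proprietary text goes in, and the most relevant context comes back for the model to use.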

Layer 3: Digital AI Models

The model layer is where symbolic understanding becomes usable intelligence.

Large language models fundamentally changed software because they introduced probabilistic reasoning into mainstream computing. Traditional software systems required deterministic instructions.

AI models infer. That sounds subtle. It is not.

It changes the philosophy of software entirely.

Instead of explicitly programming every workflow, humans increasingly specify intent while models generate outputs dynamically. This is why concepts like chain-of-thought reasoning, multimodal understanding, autoregressive generation, and state-space modeling matter. The software industry is shifting from handcrafted logic toward statistical cognition.
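To make the contrast with deterministic software concrete, here is a toy sketch of autoregressive generation: each token is sampled from a probability distribution conditioned on the previous token. The `NEXT` table is a hand-written, hypothetical stand-in for what a real model computes from learned weights, and it conditions on only one token of context.

```python
import random

# Hypothetical next-token probabilities, written by hand for illustration.
NEXT = {
    "<s>": {"agents": 0.6, "models": 0.4},
    "agents": {"call": 0.7, "plan": 0.3},
    "models": {"predict": 1.0},
    "call": {"tools": 1.0},
    "plan": {"tasks": 1.0},
    "predict": {"tokens": 1.0},
    "tools": {"</s>": 1.0},
    "tasks": {"</s>": 1.0},
    "tokens": {"</s>": 1.0},
}


def generate(seed: int, max_tokens: int = 10) -> list:
    """Sample one token at a time until the end marker or the length cap."""
    rng = random.Random(seed)
    tokens = ["<s>"]
    while tokens[-1] != "</s>" and len(tokens) < max_tokens:
        dist = NEXT[tokens[-1]]
        nxt = rng.choices(list(dist), weights=list(dist.values()))[0]
        tokens.append(nxt)
    return tokens


for seed in range(3):
    print(" ".join(generate(seed)))
```

The same starting point can yield different sentences depending on the random draw, which is the practical meaning of inference replacing fixed instructions.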

But the important observation is this:

Models alone are rapidly commoditizing. The frontier models remain expensive and strategically important, but raw intelligence increasingly behaves like infrastructure. This is similar to what happened with cloud computing.

At first, infrastructure itself captured extraordinary value.

Over time, differentiation migrated upward into orchestration, workflow design, distribution, and vertical integration. The same thing is happening to models. OpenAI and Anthropic sit at this layer, as do Meta and, to a smaller extent, xAI.

Which leads to the next layer.

Layer 4: Agent Systems

This is where the stack becomes operational.

Models generate intelligence. Agents translate intelligence into action.

That distinction matters enormously.

A chatbot answering questions is not transformative. An agent autonomously orchestrating software systems is.

Agents introduce:

Tool usage. Workflow execution. Memory. API interaction. Reasoning loops. Task decomposition. Context retrieval. Software emulation.
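Several of these pieces (tool usage, memory, and a reasoning loop) fit in a short sketch. The version below is a minimal illustration under stated assumptions: `stub_model` is a hard-coded stand-in for a real model call, and `lookup_order` and `send_email` are hypothetical tools, not any framework's API.

```python
from typing import Callable, Dict, List, Tuple


def lookup_order(order_id: str) -> str:
    """Hypothetical tool: a real agent would query a system of record."""
    orders = {"A17": "shipped", "B42": "processing"}
    return orders.get(order_id, "unknown")


def send_email(message: str) -> str:
    """Hypothetical tool: a real agent would call an email API."""
    return f"email queued: {message}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "send_email": send_email,
}

# Each memory entry is (tool name, argument, observation).
Memory = List[Tuple[str, str, str]]


def stub_model(goal: str, memory: Memory) -> Tuple[str, str]:
    """Stand-in for a model call: picks the next action from goal and memory."""
    if not memory:
        return "lookup_order", goal.split()[-1]
    if len(memory) == 1:
        return "send_email", f"Your order is {memory[0][2]}."
    return "finish", memory[-1][2]


def run_agent(goal: str, max_steps: int = 5) -> str:
    """Loop: ask the model for an action, run the tool, record the result."""
    memory: Memory = []
    for _ in range(max_steps):
        action, arg = stub_model(goal, memory)
        if action == "finish":
            return arg
        memory.append((action, arg, TOOLS[action](arg)))
    return "stopped: step limit reached"


result = run_agent("Notify the customer about order A17")
print(result)
```

The step limit is a deliberate design choice: an agent that acts on real systems needs a bound on how long it can loop without supervision.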

This is the layer where AI stops being conversational and starts becoming operational. Most people underestimate how large this market could become, because the economic value is not in generating text.

The value is in reducing human coordination overhead.

Most enterprise inefficiency is not caused by lack of intelligence.

It is caused by fragmented systems, manual orchestration, context switching, poor prioritization, and operational latency. Agents attack those problems directly. This is why companies are racing toward AI-native workflow systems.

The future enterprise software stack will increasingly consist of:

Systems of record. Systems of intelligence. Systems of execution.

And the agent layer becomes the orchestration fabric connecting all three. That is why every major software category is being rebuilt around agents:

Sales. Legal. Healthcare. Security. Finance. Engineering. Research. Customer support. Operations.

The software interface itself begins disappearing. The workflow becomes the product.

Layer 5: Digital AI Applications

At the top of the stack sit the visible applications.

This is the layer consumers interact with directly.

Coding copilots. AI legal assistants. Research systems. Creative generation tools. Scientific discovery engines. Enterprise copilots. Autonomous analysts.

But the application layer is deceptive, because most AI applications are not truly standalone products. They are abstractions sitting on top of every layer beneath them. A modern AI application increasingly depends on:

Compute infrastructure. Memory systems. Foundation models. Context orchestration. Agent frameworks. Enterprise integrations. Feedback systems. Safety layers.

This is why the strongest AI companies are increasingly becoming vertically integrated.

Owning only the application layer becomes dangerous if someone else controls the models. Owning only the models becomes dangerous if someone else owns distribution. Owning only the infrastructure becomes dangerous if higher-level orchestration captures most of the economic value.

Every layer is attempting to move both upward and downward in the stack. That is the real AI war.

The Bigger Shift

The most important takeaway from this stack is that AI is not simply another software cycle.

It is the industrialization of cognition.

Previous software revolutions primarily automated storage, communication, and workflow digitization.

AI automates parts of reasoning itself. That changes the economics of labor. It changes organizational design. It changes how products are built. It changes how companies scale. It changes what knowledge work even means.

And unlike previous technology waves, every layer of this stack reinforces the others.

Better chips enable larger models. Better models enable more capable agents. Better agents enable richer applications. Better applications generate more data. More data improves symbolic understanding.

The system compounds. That is why this moment feels different. We are not merely watching a software boom. We are watching the construction of a new digital industrial stack for intelligence itself.
